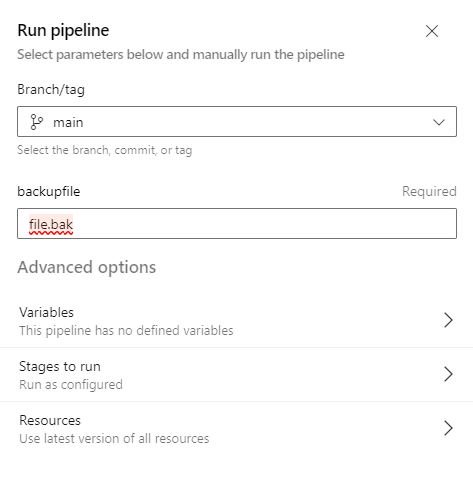

Suppose you want to enable diagnostic settings for multiple app services on Azure using Terraform. The required options are located under the Monitoring tab of each app service.

An appropriate diagnostic rule must be created to specify where the logs should be sent.

The available categories are shown below, and I will instruct Terraform to enable all of them.

To accomplish this with Terraform I used a loop. The depends_on argument is used because the app services must be created first, and only then the diagnostic settings for them. Create a file such as app_diagnostics.tf and place it inside your Terraform working directory.

    resource "azurerm_monitor_diagnostic_setting" "diag_settings_app" {
      # The app services must exist before their diagnostic settings are created.
      depends_on = [
        azurerm_windows_web_app.app_service1,
        azurerm_windows_web_app.app_service2,
      ]

      # One diagnostic setting per app service ID.
      count = length(local.app_service_ids)

      name                       = "diag-rule"
      target_resource_id         = local.app_service_ids[count.index]
      log_analytics_workspace_id = local.log_analytics_workspace_id

      # One log block per category listed in locals.tf.
      dynamic "log" {
        iterator = entry
        for_each = local.log_analytics_log_categories

        content {
          category = entry.value
          enabled  = true

          retention_policy {
            enabled = false
          }
        }
      }

      metric {
        category = "AllMetrics"

        retention_policy {
          enabled = false
          days    = 30
        }
      }
    }
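Newer releases of the azurerm provider deprecate the `log` block in favor of `enabled_log` and also deprecate the nested `retention_policy`. If you are on such a version, the resource above might be written roughly as in the sketch below. It is meant as a replacement for the resource above, not an addition to it, and the exact set of supported blocks depends on your provider version, so check the provider documentation before relying on it.

```hcl
# Sketch only: assumes an azurerm provider version that supports enabled_log.
resource "azurerm_monitor_diagnostic_setting" "diag_settings_app" {
  count = length(local.app_service_ids)

  name                       = "diag-rule"
  target_resource_id         = local.app_service_ids[count.index]
  log_analytics_workspace_id = local.log_analytics_workspace_id

  # A category is enabled simply by being listed; there is no enabled flag.
  dynamic "enabled_log" {
    iterator = entry
    for_each = local.log_analytics_log_categories

    content {
      category = entry.value
    }
  }

  metric {
    category = "AllMetrics"
  }
}
```

The explicit depends_on is left out here because referencing the app service IDs through local.app_service_ids already gives Terraform the dependency it needs to order the resources.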

Inside locals.tf I defined local values that hold the app service IDs, the ID of the Log Analytics workspace to which the logs will be sent, and the categories I want to enable in the diagnostic settings. As shown in the first screenshot, all the categories are selected.

    locals {
      log_analytics_workspace_id = "/subscriptions/.../geralexgr-logs"

      log_analytics_log_categories = [
        "AppServiceHTTPLogs",
        "AppServiceConsoleLogs",
        "AppServiceAppLogs",
        "AppServiceAuditLogs",
        "AppServiceIPSecAuditLogs",
        "AppServicePlatformLogs",
      ]

      app_service_ids = [
        azurerm_windows_web_app.app_service1.id,
        azurerm_windows_web_app.app_service2.id,
      ]
    }
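Instead of hardcoding the category names, it may be possible to look them up with the azurerm_monitor_diagnostic_categories data source. The sketch below assumes a provider version that exposes the `log_category_types` attribute; the data source label and the local name `discovered_log_categories` are hypothetical and only used for illustration.

```hcl
# Sketch only: reads the categories that Azure offers for one of the app services.
data "azurerm_monitor_diagnostic_categories" "app_service1" {
  resource_id = azurerm_windows_web_app.app_service1.id
}

locals {
  # Hypothetical local; it could be used in place of log_analytics_log_categories.
  discovered_log_categories = data.azurerm_monitor_diagnostic_categories.app_service1.log_category_types
}
```

The hardcoded list keeps the plan fully predictable, while the lookup follows whatever categories Azure reports for the resource, so which one fits depends on how much control you want over what gets enabled.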

As a result, the loop enables every diagnostic category listed in log_analytics_log_categories for each app service you add to app_service_ids.
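One thing to keep in mind with count is that each diagnostic setting is tracked by its position in the list, so removing or reordering entries in app_service_ids can cause Terraform to recreate settings for the entries that shifted. A possible alternative is for_each over a map with static keys, sketched below. The local `app_services` and the resource label `diag_settings_app_by_key` are hypothetical names introduced for this example; the rest mirrors the resource shown earlier.

```hcl
locals {
  # Hypothetical map: the keys are static labels, the values are the app service IDs.
  app_services = {
    app_service1 = azurerm_windows_web_app.app_service1.id
    app_service2 = azurerm_windows_web_app.app_service2.id
  }
}

# Sketch only: an alternative to the count-based resource, not an addition to it.
resource "azurerm_monitor_diagnostic_setting" "diag_settings_app_by_key" {
  # Each instance is tracked by its map key rather than by a list index.
  for_each = local.app_services

  name                       = "diag-rule"
  target_resource_id         = each.value
  log_analytics_workspace_id = local.log_analytics_workspace_id

  dynamic "log" {
    iterator = entry
    for_each = local.log_analytics_log_categories

    content {
      category = entry.value
      enabled  = true

      retention_policy {
        enabled = false
      }
    }
  }

  metric {
    category = "AllMetrics"

    retention_policy {
      enabled = false
    }
  }
}
```

The map keys are plain strings on purpose: for_each needs its keys to be known at plan time, and the IDs of app services that have not been created yet are not.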