Recently I ran into the error below when I tried to use some variables that I had defined in an Ansible Vault file.

My playbook just prints some values retrieved from a vault. I run it with the following command:

ansible-playbook vault.yml --vault-password-file=vault_key
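
The playbook itself is not reproduced in this post. As a rough sketch only, a playbook of this kind might look like the following. The variable names `user_password` and `database_password` come from the error message further down; the vars file name `secrets.yml`, the `localhost` target, and the task layout are assumptions for illustration.

```yaml
# vault.yml: hypothetical reconstruction, not the original playbook
- hosts: localhost
  gather_facts: false
  vars_files:
    - secrets.yml   # assumed name of the vault-encrypted variables file
  tasks:
    - name: Print values retrieved from the vault
      debug:
        msg: "user_password={{ user_password }}, database_password={{ database_password }}"
```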

Running the playbook produces the following error:

ERROR! variable files must contain either a dictionary of variables, or a list of dictionaries. Got: user_password:password database_password:password (<class 'ansible.parsing.yaml.objects.AnsibleUnicode'>)

Dictionary file

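To resolve the issue, add a space after the colon between each dictionary key and its value, for example `user_password: password` rather than `user_password:password`. Without that space, YAML does not see a key/value pair at all: it reads the whole content as one plain string, which is why the error reports an `AnsibleUnicode` object instead of a dictionary.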

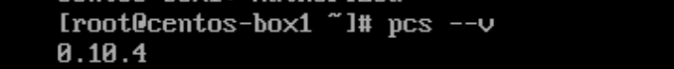
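
In text form, the difference in the decrypted vars file looks roughly like this. The keys and values are taken from the error message above; the broken lines are commented out so the fragment stays valid YAML.

```yaml
# Broken: no space after the colon, so YAML folds both lines into one plain string
# user_password:password
# database_password:password

# Fixed: a space after each colon makes this a dictionary with two keys
user_password: password
database_password: password
```

Since the file is encrypted, one way to make this change is `ansible-vault edit` on the vars file, passing the same `--vault-password-file=vault_key` option used for the playbook run.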

Run the playbook again and it should complete successfully.