When working with Terraform loops, you may encounter the following error:

> The "for_each" value depends on resource attributes that cannot be determined until apply, so Terraform cannot predict how many instances will be created.

I ran into this issue when I tried to dynamically create an `azurerm_monitor_diagnostic_setting` resource for multiple web apps.

Here is the `for_each` version of the code:

```hcl
resource "azurerm_monitor_diagnostic_setting" "diag_settings_app" {
  depends_on = [azurerm_windows_web_app.app_service1, azurerm_windows_web_app.app_service2]

  # This is what fails: toset() uses the IDs themselves as instance keys,
  # and the IDs are unknown until the web apps have been created.
  for_each = toset(local.app_service_ids)

  name                       = "diag-rule"
  target_resource_id         = each.value
  log_analytics_workspace_id = local.log_analytics_workspace_id

  # One log block per category in local.log_analytics_log_categories.
  dynamic "log" {
    iterator = entry
    for_each = local.log_analytics_log_categories

    content {
      category = entry.value
      enabled  = true

      retention_policy {
        enabled = false
      }
    }
  }

  metric {
    category = "AllMetrics"

    retention_policy {
      enabled = false
      days    = 30
    }
  }
}
```

`local.app_service_ids` holds the IDs of the two web apps:

```hcl
locals {
  # Both IDs are unknown at plan time until the web apps exist.
  app_service_ids = [
    azurerm_windows_web_app.app_service1.id,
    azurerm_windows_web_app.app_service2.id,
  ]
}
```

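To work around the issue, I used a `count` loop instead. `count` only needs the number of instances to be known at plan time, and `length(local.app_service_ids)` is known (two elements) even though the IDs themselves are not: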

```hcl
resource "azurerm_monitor_diagnostic_setting" "diag_settings_app" {
  # Not strictly required: referencing the web app IDs through
  # local.app_service_ids already creates an implicit dependency.
  depends_on = [azurerm_windows_web_app.app_service1, azurerm_windows_web_app.app_service2]

  # The list length is known at plan time, so count can be evaluated.
  count = length(local.app_service_ids)

  name                       = "diag-rule"
  target_resource_id         = local.app_service_ids[count.index]
  log_analytics_workspace_id = local.log_analytics_workspace_id

  # One log block per category in local.log_analytics_log_categories.
  dynamic "log" {
    iterator = entry
    for_each = local.log_analytics_log_categories

    content {
      category = entry.value
      enabled  = true

      retention_policy {
        enabled = false
      }
    }
  }

  metric {
    category = "AllMetrics"

    retention_policy {
      enabled = false
      days    = 30
    }
  }
}
```
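If you would rather keep `for_each`, another commonly used fix (not the one I used here) is to pass it a map whose keys are static strings. Terraform needs the instance keys to be known at plan time, but the map values, here the web app IDs, may remain unknown until apply. The following is a minimal sketch under that assumption. It is meant to replace the `count`-based resource, not to sit alongside it, and the local `app_services` and its keys `app_service1`/`app_service2` are hypothetical names chosen for illustration:

```hcl
# Hypothetical sketch: replaces the count-based resource rather than adding to it.
locals {
  # Static keys (known at plan time) mapped to web app IDs (unknown until apply).
  app_services = {
    app_service1 = azurerm_windows_web_app.app_service1.id
    app_service2 = azurerm_windows_web_app.app_service2.id
  }
}

resource "azurerm_monitor_diagnostic_setting" "diag_settings_app" {
  # No depends_on: the references in local.app_services create the dependency.
  for_each = local.app_services

  name                       = "diag-rule"
  target_resource_id         = each.value
  log_analytics_workspace_id = local.log_analytics_workspace_id

  dynamic "log" {
    iterator = entry
    for_each = local.log_analytics_log_categories

    content {
      category = entry.value
      enabled  = true

      retention_policy {
        enabled = false
      }
    }
  }

  metric {
    category = "AllMetrics"

    retention_policy {
      enabled = false
      days    = 30
    }
  }
}
```

With a map, instances are addressed by key (`diag_settings_app["app_service1"]`) rather than by list position (`diag_settings_app[0]`), so removing an app from the middle of the list does not shift the remaining instances. Switching an existing deployment from `count` to `for_each` changes those addresses, though, so Terraform will plan to recreate the settings unless you migrate the state, for example with `moved` blocks (Terraform 1.1 and later).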

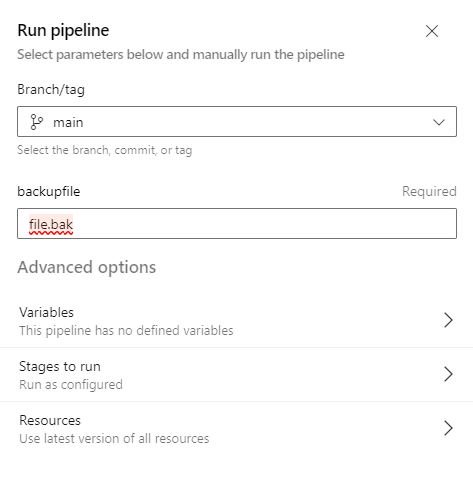

`terraform apply` then works: